CLIP-SVD: Efficient and Interpretable Vision-Language Adaptation via Singular Values

TMLR (2026).

TMLR (2026).

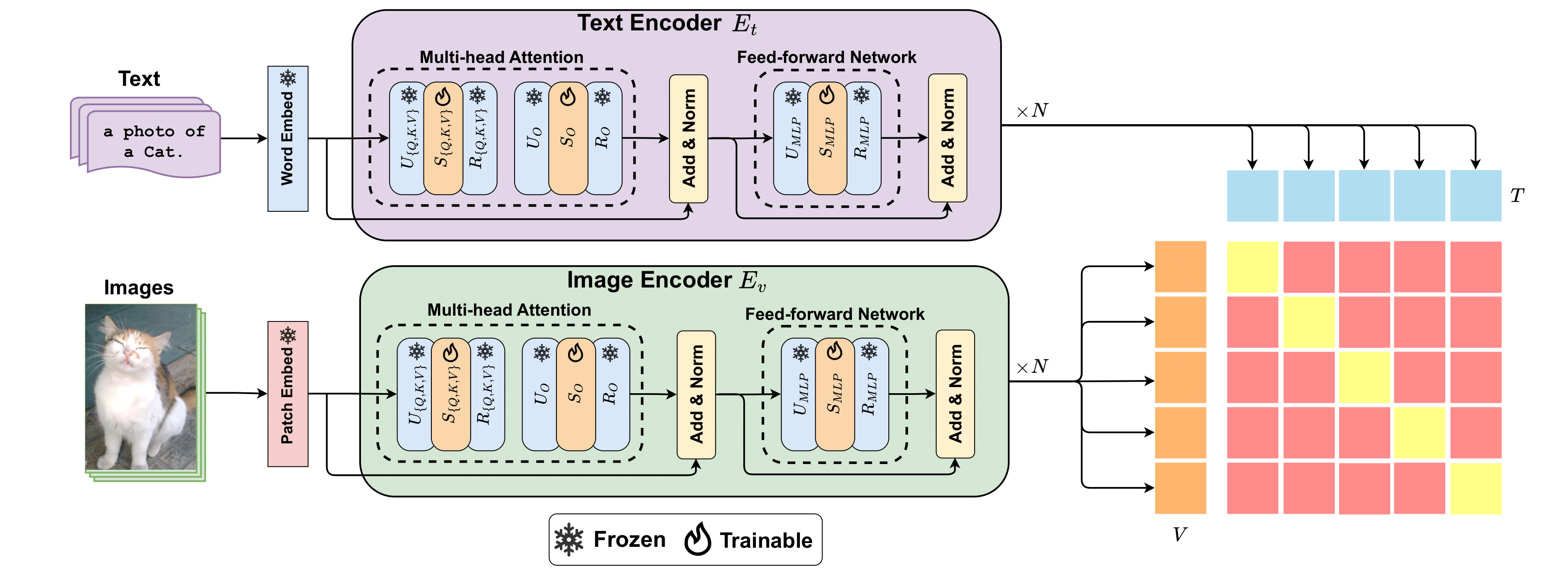

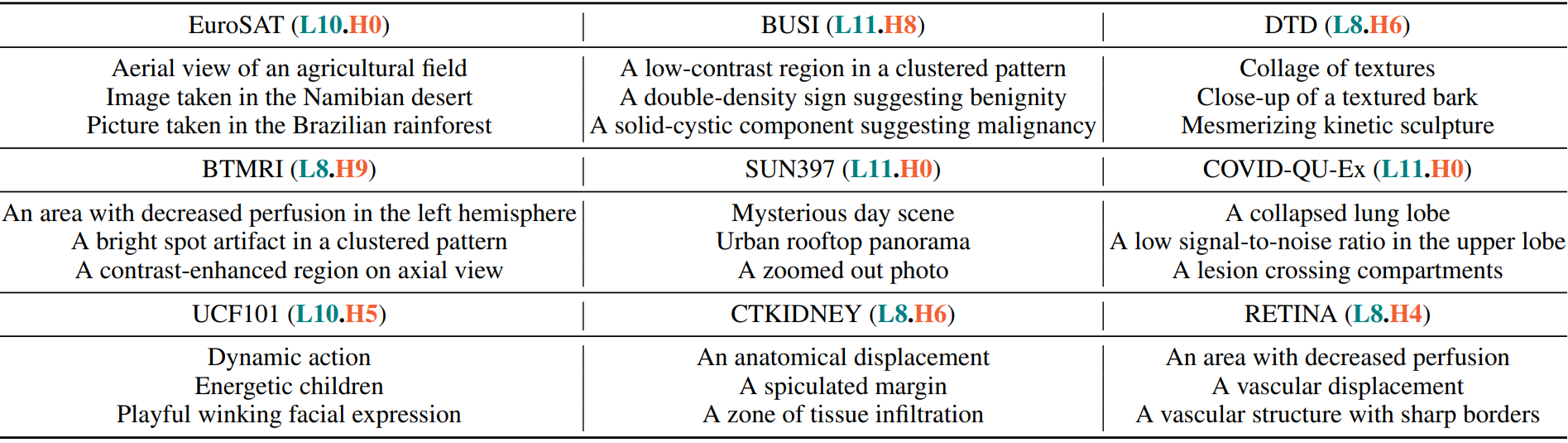

Abstract: Vision-language models (VLMs) like CLIP have shown impressive zero-shot and few-shot learning capabilities across diverse applications. However, adapting these models to new fine-grained domains remains difficult due to reliance on prompt engineering and the high cost of full model fine-tuning. Existing adaptation approaches rely on augmented components, such as prompt tokens and adapter modules, which could limit adaptation quality, destabilize the model, and compromise the rich knowledge learned during pretraining. In this work, we present CLIP-SVD, a multi-modal and parameter-efficient adaptation framework that applies Singular Value Fine-tuning (SVF) to CLIP, leveraging Singular Value Decomposition (SVD) to modify the internal parameter space of CLIP without injecting additional modules. Specifically, we fine-tune only the singular values of the CLIP parameter matrices to rescale the basis vectors for domain adaptation while retaining the pretrained model. This design enables enhanced adaptation performance using only 0.04% of the model's total parameters and better preservation of its generalization ability. CLIP-SVD achieves state-of-the-art classification results on 11 natural and 10 biomedical datasets, outperforming previous methods in both accuracy and generalization under few-shot settings. Additionally, we leverage a natural language-based approach to analyze the effectiveness and dynamics of the CLIP adaptation to allow interpretability of CLIP-SVD. Overall, this work provides the first extensive empirical evaluation of SVD-based finetuning in the vision-language model setting.

| Method | K = 1 | K = 2 | K = 4 | K = 8 | K = 16 |

|---|---|---|---|---|---|

| Zero-shot CLIP | – | – | 65.36 | – | – |

| CoOp | 68.09 | 70.13 | 73.59 | 76.45 | 79.01 |

| MaPLe | 69.27 | 72.58 | 75.37 | 78.89 | 81.79 |

| CLIP-LoRA | 72.20 | 75.41 | 77.32 | 80.10 | 82.89 |

| CLIP-SVD (Ours) | 73.20 | 76.06 | 78.18 | 80.55 | 82.97 |

| Method | K = 1 | K = 2 | K = 4 | K = 8 | K = 16 |

|---|---|---|---|---|---|

| Zero-shot BiomedCLIP | – | – | 42.38 | – | – |

| CoOp | 52.59 | 55.71 | 61.35 | 67.74 | 71.48 |

| BiomedCoOp | 56.87 | 59.32 | 64.34 | 68.96 | 73.41 |

| CLIP-SVD (Ours) | 56.35 | 62.63 | 68.02 | 73.26 | 76.46 |

| Method | Base | Novel | HM |

|---|---|---|---|

| CLIP | 69.34 | 74.22 | 71.70 |

| CoOp | 82.69 | 63.22 | 71.66 |

| CoCoOp | 80.47 | 71.69 | 75.83 |

| KgCoOp | 80.73 | 73.60 | 77.00 |

| ProGrad | 82.48 | 70.75 | 76.16 |

| MaPLe | 82.28 | 75.14 | 78.55 |

| IVLP | 84.21 | 71.79 | 77.51 |

| GDA | 83.96 | 74.53 | 78.72 |

| TCP | 84.13 | 75.36 | 79.51 |

| CLIP-LoRA | 84.10 | 74.80 | 79.18 |

| CLIP-SVD (Ours) | 84.38 | 76.29 | 80.13 |

| Method | Base | Novel | HM |

|---|---|---|---|

| BiomedCLIP | 49.27 | 67.17 | 55.23 |

| CoOp | 76.71 | 65.34 | 68.80 |

| CoCoOp | 75.52 | 67.74 | 69.11 |

| KgCoOp | 71.90 | 65.94 | 67.22 |

| ProGrad | 75.69 | 67.33 | 69.86 |

| MaPLe | 65.40 | 49.51 | 53.10 |

| XCoOp | 74.62 | 63.19 | 68.43 |

| BiomedCoOp | 78.60 | 73.90 | 74.04 |

| GDA | 57.70 | 64.66 | 60.98 |

| DCPL | 73.70 | 69.35 | 71.46 |

| CLIP-LoRA | 70.56 | 59.84 | 64.76 |

| CLIP-SVD (Ours) | 82.64 | 74.31 | 78.25 |

CLIP-SVD enables a principled analysis of adaptation dynamics by inspecting ranked singular value updates and mapping them to natural language concepts. This facilitates interpretation of which semantic dimensions are amplified or suppressed during few-shot learning, while preserving the pretrained representation structure.

@article{koleilat2026clipsvd,

title={{CLIP}-{SVD}: Efficient and Interpretable Vision{\textendash}Language Adaptation via Singular Values},

author={Taha Koleilat and Hassan Rivaz and Yiming Xiao},

journal={Transactions on Machine Learning Research},

issn={2835-8856},

year={2026},

url={https://openreview.net/forum?id=XYy8pwqwMR}

}